- 100% US-Based Team

Your White Label Partner for SEO, PPC & Facebook Ads!

We support successful resellers, agencies, and enterprise companies with our world-class marketing fulfillment, direct API integration, and cutting-edge results.

Expertise you can trust.

Trusted by hundreds of agencies, resellers, and global enterprises

From 5-client agencies to enterprise-level organizations running thousands of campaigns, our partners need high-caliber, dependable results for their white label SEO, PPC, Facebook Ads, and TikTok Ads. We handle any niche and industry to supercharge your business and get top-notch results.

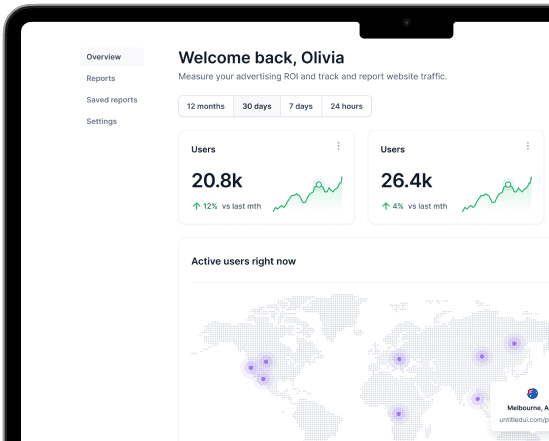

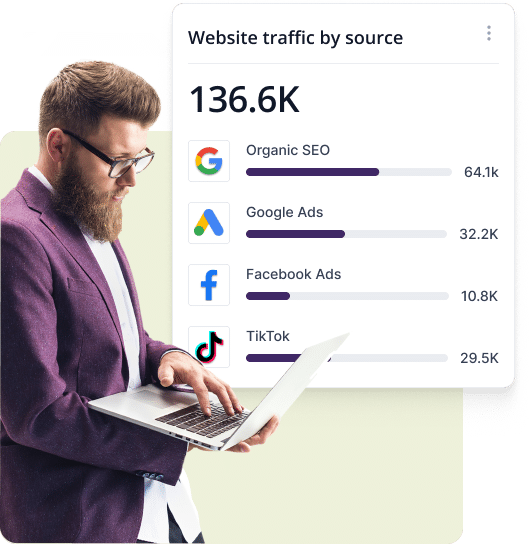

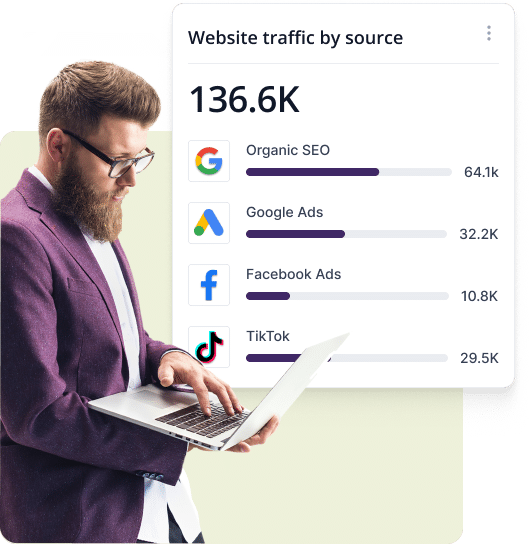

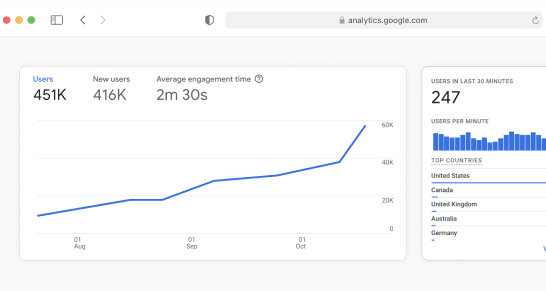

Real-time reporting with Semify’s API

Campaigns & Case Studies

Don’t just take our word for it. Learn how other agencies, resellers, and enterprise partners have seen massive growth since teaming up with Semify.

Why Partner With Semify

100% USA-Based Experts

Dedicated Account Managers

Proven Playbook

Custom Software & Integrations

Everything You Need Under One Roof

Our team of SEO experts helps get your clients ranking in organic search results with a comprehensive strategy and a technically optimized site.

Resellers take advantage of our experience managing thousands of successful pay-per-click programs for businesses.

Offering your clients white label Facebook Ads will help them get in front of the massive number of people on Meta’s platforms.

Semify SEO resellers can leverage TikTok’s powerful search engine capabilities (and our expertise) to drive ad traffic for their clients.

Powerful, intentional, consistent content creation made to increase organic and paid website traffic. Every business needs content to stay competitive.

More Reasons To Partner With Semify

API integration & project management

Transparency

100% white labeled

Scalability

Custom reporting

Custom dashboard

Are you ready for better client results without the headache?

Frequently Asked Questions (FAQs)

What is a White Label Marketing Agency?

If you’ve ever purchased a “house brand” product in a grocery store, you’ve probably bought a white-labeled product! It’s essentially the same thing when you, as a marketing agency owner or marketing professional at an enterprise organization, work with a white label agency to outsource marketing services for your clients.

A white label digital marketing agency provides marketing services and deliverables (such as white label SEO, white label PPC, white label Facebook ads, and white label TikTok ads) to other agencies, rather than directly to business owners. At Semify, we create and deliver these services in a way that maintains our anonymity in favor of our resellers’ own branding, ensuring their clients never know we exist.

The bottom line? We do all the marketing fulfillment work and deliver it under your own agency’s branding. You get all the credit, and your clients get the results they want. Just think of us as a behind-the-scenes extension of your existing team.

What Are the Benefits of White Label Digital Marketing?

One of the main benefits of working with a white label agency for outsourced marketing services is that you’ll gain access to marketing expertise without having to hire, fire, or train. Rather than spend tens of thousands of dollars on finding the best candidates and paying for their salary, benefits, and other overhead costs, you can save money without sacrificing quality.

When you work with a white label digital marketing partner like Semify, you can focus on things like sales and customer retention while we handle everything on the fulfillment side. You won’t have to spend months worrying about the finer points of SEO or PPC campaign management, nor will you have to burn the midnight oil to deliver promised services on time. We’ll do all the grunt work for you so you can give your undivided attention to other crucial areas of your business. You’ll be able to take on more work so you can scale over time, rather than turn clients away or risk late delivery.

Not only does outsourcing your digital marketing help you save money, but it can also provide access to valuable tools and resources for sustainable growth. Our proprietary white label agency software will give you more control and transparency, providing you with better peace of mind and better client results.

Is Working With a White Label Agency Better Than Hiring In-House?

Most resellers find that working with a white label digital marketing agency is preferable to hiring more employees to complete client work. While it may seem like having everything under one physical roof is best, that comes with some major costs. It takes thousands of dollars just to hire one employee – and that doesn’t even account for the costs associated with wages, health benefits, paid time off, training, equipment, and office space.

Working with a white label agency helps you avoid the headaches of hiring and managing employees while keeping costs down. All told, outsourcing your marketing needs to a white label agency like Semify can save you a significant amount of money and other resources compared to hiring in-house.

Why Work With a White Label Digital Marketing Agency Instead of Independent Contractors?

Some marketing agencies prefer to work with freelance marketers to fulfill their clients’ needs. But while this route may seem inexpensive, there are definite downsides.

For one thing, you’ll quickly hit a cap as to how much work you can outsource to a single freelancer. They’ll be limited by their own bandwidth issues, which means you won’t have the capacity to take on new clients or deliver services quickly. What’s more, you’ll have to thoroughly evaluate your freelance candidates to ensure they’re actually able to deliver on their promises. And even if they seem great in the beginning, you won’t have much recourse if they take on too many clients or ghost you. That can leave you in the lurch and make clients more vulnerable to churn. Plus, quality control can be an issue with many independent contractors. You may have to spend more of your time editing their work or requesting status updates, which means taking time away from sales, customer service, and other core areas of your business.

With independent contractors, you’ll get what you pay for (and sometimes, you may not even get that!). Working with a white label agency means you’ll get better transparency, quality control, and guaranteed on-time delivery. Our team is 100% USA-based, so you won’t have to worry about communication drop-offs or low-quality deliverables. We’re completely accountable for our work and to our partners, so you can feel confident that we make good on our promises.

Who Can Benefit From Partnering With a White Label Marketing Agency Like Semify?

Agencies of any size can benefit from outsourcing marketing services. In particular, Semify can help if you’re:

- Struggling to keep up with growing client demand

- Unable to devote time or energy to keeping up with marketing trends

- Trying to avoid hiring in-house or managing more employees

- In need of enterprise marketing tools your current vendor can’t provide

- Taking on too much work yourself but reluctant to use freelancers

- Looking to offer more marketing services than you currently do

- Tired of handling the fulfillment side of your business yourself